Prolixium Communications Network

Revision as of 04:58, 1 December 2014

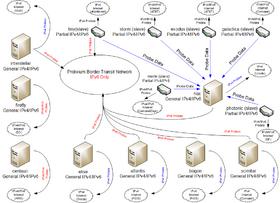

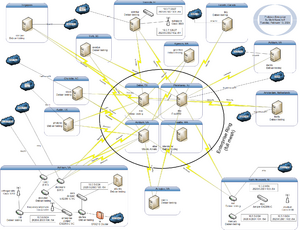

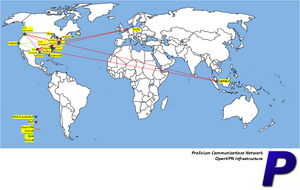

The Prolixium Communications Network (also known as PCN, mynet, My Network, and Prolixium .NET) is a collection of small, geographically dispersed computer networks that provide IPv4 and IPv6, VPN, and VoIP services to the Kamichoff family. Owned and operated solely by Mark Kamichoff, PCN often serves as a testbed for various network experiments. The majority of the PCN nodes are connected via residential data services (cable modem), while some located in data centers have Fast Ethernet connections to the Internet.

Current State

Overview

As of October 13, 2014, PCN is composed of several networks along the east coast of the United States, connected via OpenVPN and 6in4 tunnels:

- North Brunswick, NJ (trx): nat.prolixium.com on HFC via Verizon FiOS

- New York, NY (jfk): dax.prolixium.com on Gigabit Ethernet via Internap

- Toronto, Canada (yyz): tiny.prolixium.com on Gigabit Ethernet via atlantic.net

- Atlanta, GA (atl): nox.prolixium.com on Fast Ethernet via Sago Networks

- Seattle, WA (sea) on HFC via Comcast Business

- Sarasota, FL (srq): scimitar.prolixium.com on HFC via Comcast

- Carteret, NJ (ewr): tachyon.prolixium.com on HFC via Comcast

- Moscow, Russia (svo): firefly.prolixium.com on Gigabit Ethernet via VDS6

- Singapore (sin): centauri.prolixium.com on Gigabit Ethernet via Amazon EC2

Each site has multiple OpenVPN tunnels to other locations (the network is almost fully meshed), each with a 6in4 tunnel inside, providing both IPv4 and IPv6 communications with data protection and security. Quagga's ospfd, ospf6d, and bgpd are used in the production network (the term production is relative) on commodity PC hardware, while the Seattle site also utilizes Juniper SRX and SSG firewalls.

Routing

The routing infrastructure consists of several autonomous systems, taken from the IANA-allocated private range: 64512 through 65534. Each site runs IBGP, possibly with a route reflector, and its own IGP for local next-hop resolution. EBGP is used between sites and peering connections. IPv4 Internet connectivity for each site is achieved by advertisement of default routes from machines performing NAT. The lab is connected to starfire in Charlotte (cha). The PCN used to use one large OSPF area: no EGP. It was converted to a BGP confederation setup, then reconverted to its current state.
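As a small illustration of the numbering constraint above, the following sketch checks whether an AS number falls inside the private range quoted in the text. The per-site AS numbers in the example are hypothetical; the actual PCN assignments are not listed on this page.

```python
# 16-bit private-use AS numbers, as quoted in the text above.
PRIVATE_ASN_FIRST = 64512
PRIVATE_ASN_LAST = 65534


def is_private_asn(asn: int) -> bool:
    """Return True if asn falls inside the 16-bit private-use range."""
    return PRIVATE_ASN_FIRST <= asn <= PRIVATE_ASN_LAST


# Hypothetical per-site assignments, for illustration only.
example_site_asns = {"sea": 64512, "jfk": 64513, "atl": 64514}
all_private = all(is_private_asn(asn) for asn in example_site_asns.values())
```

A check like this could be run against a list of planned site AS numbers before any neighbor statements are written.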

IPv6 Connectivity

IPv6 connectivity is provided by Internap in New York, NY, via dax.prolixium.com. The IPv6 default route is learned by the rest of the network via BGP on dax.prolixium.com. dax.prolixium.com runs OpenBSD's pf, which regulates inbound and outbound IPv6 traffic through stateful inspection.

firefly also has native IPv6 connectivity, but does not have any prefixes routed toward it.

DNS

DNS is done with two views: internal and external. PCN has two external nameservers and four internal ones, all of which perform zone transfers from the master nameserver, ns3.antiderivative.net. antiderivative.net is used for all NS records, as well as glue records at the GTLD servers. The internal nameservers are ns{1-4} and the external ones are ns{2,3}. Each zone has two views, internal and external, and a common file that is included in both views (SOA, etc.). The zones include the following:

- Internal view, answering to 10/8, 172.16/12, and 192.168/16 addresses

  - 3.10.in-addr.arpa. and 3.16.172.in-addr.arpa. reverse zones

  - prolixium.com, prolixium.net, antiderivative.net, etc.'s internal A/CNAME records

- External view, answering to everything !RFC1918

  - prolixium.com, prolixium.net, antiderivative.net, etc.'s external A/CNAME records

- Common information, answering for all hosts

  - 180/30.189.9.69.in-addr.arpa., 232/29.186.9.69.in-addr.arpa, 2.0.0.0.1.0.0.0.8.c.8.4.1.0.0.2.ip6.arpa., and other reverse zones

  - prolixium.com, prolixium.net, antiderivative.net, etc.'s common MX records
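The view split in the list above amounts to matching the querying client's address against the RFC 1918 ranges. Here is a minimal sketch of that decision, not the actual nameserver configuration. The function name `select_view` is hypothetical, and sending IPv6 clients to the external view is an assumption, since the page does not say how they are classified.

```python
import ipaddress

# RFC 1918 ranges served by the internal view, per the list above.
INTERNAL_NETS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def select_view(client_ip: str) -> str:
    """Pick the view a query from client_ip would be answered from."""
    addr = ipaddress.ip_address(client_ip)
    # Assumption: only IPv4 RFC 1918 sources are treated as internal.
    if addr.version == 4 and any(addr in net for net in INTERNAL_NETS):
        return "internal"
    return "external"
```

The records from the common file would be served in either case, since that file is included in both views.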

Previously, the Xicada DNS Service (developed by Mark Kamichoff) kept track of all the forward delegations as well as IPv4 reverse delegations on Xicada. The administrator of each node entered their zones into a web form, and then configured their DNS server to pull down a forwarders definition for all Xicada zones. It supported BIND and djbdns, but also output a CSV file in case someone decided to use another DNS server. It was originally intended that each DNS server should pull down a fresh copy of the forwarders definition file nightly, but there were really no rules.

Mark Kamichoff has a policy on his network to have DNS entries (including A, AAAA, and PTR) for each and every active IP address. If a host is offline, the DNS records should be immediately expunged. This removes the need for a host management system or a collection of poorly-maintained spreadsheets. If an IP address is needed, its PTR record should be checked first to see whether the address is in use. All DHCP-assigned IP addresses are named {site ID}-{lastoctet}.prolixium.com. Again, no confusion. DNS itself is a database, so why not use it?
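The naming rule for DHCP-assigned addresses and the "check the PTR" habit can be sketched as follows. This is an illustration only: the helper names, the site ID `sea`, and the address 10.3.1.57 are hypothetical examples, not values taken from the network.

```python
import ipaddress

DOMAIN = "prolixium.com"


def dhcp_hostname(site_id: str, ip: str) -> str:
    """Build the {site ID}-{lastoctet} name for a DHCP-assigned IPv4 address."""
    last_octet = int(ipaddress.IPv4Address(ip)) & 0xFF
    return f"{site_id}-{last_octet}.{DOMAIN}"


def ptr_name(ip: str) -> str:
    """Return the reverse-DNS owner name to look up before using an address."""
    return ipaddress.ip_address(ip).reverse_pointer + "."


# Hypothetical example values.
name = dhcp_hostname("sea", "10.3.1.57")
owner = ptr_name("10.3.1.57")
```

If a PTR lookup for the owner name returns nothing, the address is free under the policy described above.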

All transit links on PCN are addressed using the prolixium.net domain. The format is {unit/VLAN}.{interface}.{host}.prolixium.net. For example, the xl1 interface on starfire would be: xl1.starfire.prolixium.net. There is a collection of DNS entries for every IPv4 and IPv6 transit link. There is not a single hop in the network that lacks a PTR record (or has a PTR record without a corresponding A or AAAA record). Each router has a loopback interface with IPv4 and IPv6 addresses (if supported).
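The transit naming format can be expressed as a small helper. This sketch assumes the unit/VLAN label is simply omitted when an interface has none, which matches the xl1.starfire.prolixium.net example. The function name and the VLAN example are hypothetical.

```python
from typing import Optional

TRANSIT_DOMAIN = "prolixium.net"


def transit_name(host: str, interface: str, unit: Optional[str] = None) -> str:
    """Build {unit/VLAN}.{interface}.{host}.prolixium.net, skipping an absent unit."""
    labels = [unit, interface, host, TRANSIT_DOMAIN]
    return ".".join(label for label in labels if label)
```

For instance, `transit_name("starfire", "xl1")` yields the name given in the text, while a hypothetical VLAN 5 on an interface eth0 would become `5.eth0.starfire.prolixium.net`.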

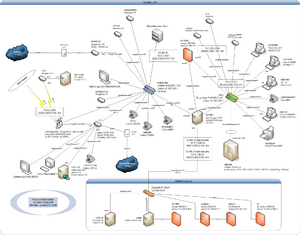

Seattle-Specific Setup

The network setup in Seattle (formerly Charlotte) is slightly different from the other sites, where there is one router, and all Internet and WAN traffic leaves through that host. Comcast Business provides a static prefix (50.248.192.200/29), which has the following assignments:

- Juniper Networks SRX100 (einstein)

- Juniper Networks SSG 20 Wireless (e)

- Cisco ASA 5505 (hubris)

- Linux router (starfire)
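For a sense of how much room the static prefix offers, the sketch below enumerates the usable addresses in 50.248.192.200/29. It deliberately does not map addresses to devices, because the page does not state which address each device holds.

```python
import ipaddress

# The static prefix quoted above.
block = ipaddress.ip_network("50.248.192.200/29")

# hosts() leaves out the network and broadcast addresses.
usable = list(block.hosts())
first_usable = str(usable[0])
last_usable = str(usable[-1])
```

A /29 leaves six usable addresses, which is enough for the four devices listed with a little headroom.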

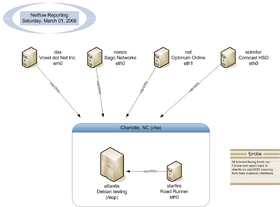

starfire is the core router with 5x Gigabit Ethernet interfaces. VPN traffic leaves starfire, but all non-RFC 1918 traffic is balanced via L4 protocols between the two firewalls. In the past, NetFlow was used on atlantis, which was depicted in the drawing below:

Previously, the NetFlow collector ran ntop, but this was uninstalled due to instability.

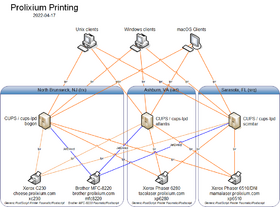

Printing

The whole printing/CUPS/lpd setup is mostly an annoyance. Most people would want to run CUPS on every Unix client on the network. Mark Kamichoff believes it's better to have a lightweight client send a PostScript file via lpd to a CUPS server rather than sending a huge RAW raster stream across the network and having both the client and server do print processing. See the diagram below:

SmokePing

For monitoring, PCN uses a combination of Nagios, SmokePing, and MRTG. The SmokePing setup itself is a combination of slaves and masters, both IPv4 and IPv6.

nox is the master for three slaves:

- tachyon - Soekris box connected to Comcast

- evolution - ALIX box connected to Verizon Wireless

- tiny - VPS connected to Peer1

History

- Warning: This entire section is written in the first-person (Mark Kamichoff's) point of view

Beginnings

After joining the Xicada network back at RPI, I decided to continue linking all of my networks and sites together via various VPN technologies. At first, the network was just a simple VPN between my network at home and a few computers in my dorm room at RPI. The connection tunnelled through RPI's firewall like a knife through warm butter, using OpenVPN's UDP encapsulation mode. Actually, a site to site UDP tunnel was the only thing OpenVPN offered, back then. My router at RPI was a blazing-fast Pentium 166MHz box running Debian GNU/Linux. At that point, my Xicada tunnels were terminated on another box I found in the trash, an old AMD K6-300, which eventually ran FreeBSD 4.

The network quickly started expanding, and I was able to move the K6-300 box (starfire) into the ACM's lab, which was given a 100mbit link, in the basement of the DCC. At this point in time, my network had three sites: home, the lab, and my dorm room. Since I didn't stick around RPI during most summers, I reterminated the Xicada links on starfire, since it sported a more permanent link.

Shortly after starfire was moved to the lab, I started toying with IPv6, and acquired a tunnel via Freenet6 (now Hexago, since they're actually trying to sell products, or something). RPI's firewall wouldn't allow IP protocol 41 through the firewall, and my attempts at getting this opened up for my IP failed. So, I terminated the IPv6 tunnel on my box at home, which sat on Optimum Online. Freenet6 gave me a /48 block out of the 3ffe::/16 6bone space, and I started distributing /64's out to all of my LAN segments. I started running Zebra's OSPFv3 daemon, and realized it was buggy as all get out. It mostly worked, though. Since Freenet6 gave me an ip6.int. delegation, I spent some time applying tons of patches to djbdns, my DNS server of choice, back then. After tons of patching, I got IPv6 support, which was fairly neat at the time. What did I use this new-found IPv6 connectivity for? IRC and web site hosting. www.prolixium.com has had an AAAA record since at least 2003.

Sometime in 2003 (I forget when), I moved my IPv6 tunnel to BTExact, British Telecom's free tunnel broker that actually gave out non-6bone /48's and ip6.arpa. DNS delegations. I quickly moved to them, and enjoyed quicker speeds than Freenet6 for about a year. Of course, after a year, my parents had a power outage at home, and my server lost the IP it had with OOL for the past two years. BTExact, at that time, had frozen their tunnel broker service, and didn't allow any modifications or new tunnels to be created. I went back to Freenet6, who had changed to 2001::/16 space.

After leaving RPI, and getting a job, I decided to purchase a dedicated server from SagoNet. I extended my network down to Tampa, FL, where the server was located.

Fast-forwarding to the present day, I currently have six sites, and native IPv6 from Voxel dot Net. Almost every host on the network is IPv6-aware, and the IPv6 connectivity is controlled completely by pf.

Xicada connectivity at this point has been terminated, due to lack of interest.

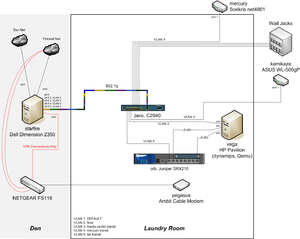

VLAN Conversion (Laundry Room Data Center)

I'm lucky to have CAT5(e?) cabled to every room in my condo, all aggregated in the laundry room, so I figured it was time to deploy a couple of different VLANs on my network. Initially, I just had a dumb switch connecting all of the various ports in different rooms together. Since that was too simple of a solution, I picked up a Cisco 2940 switch on eBay, and set up a 1Gbit trunk between starfire and the laundry room. I set up 4x VLANs:

- 2: Various wall jacks

- 3: Media center link (connected to kamikaze)

- 4: Linksys link (connected to mercury)

- 5: Lab link (connected to hysteresis)

I ended up throwing some other gear in the laundry room along with the switch, and ended up moving my lab (3.0) there.

BGP (Confederations) Conversion

History

Starting with the Xicada project, my network was one big OSPF backbone area. Entirely flat, except for some route redistribution for the lab connection. When I added OSPFv3 for IPv6 reachability, it was no different - one big area: no stub areas, no frills. It worked, but was boring, and didn't provide the flexibility required if I wanted to start redirecting Internet traffic.

After reading up on BGP, I realized I could make my network 1000% more complex, while gaining some real-world experience. Sounds like a plan, huh?

Preparation and Design

Due to some Quagga instability issues, I originally tested out some alternate BGP/OSPF implementations, including XORP. Unfortunately, none of them fit the bill, and XORP, although promising, was horribly unstable and appeared to suffer from configuration file parsing issues, more than anything else. So I decided to stick with Quagga. I also decided to keep two separate BGP connections, one for IPv4 and one for IPv6 (so I didn't run into any nasty next-hop accessibility problems).

One of the goals of the redesign was to eliminate the large network-wide IGP process and break down each site into sub-ASes, using BGP confederations and route reflectors. This required a partial mesh of CBGP (confederation BGP - like EBGP, but more attributes are retained) between all the sites, to take advantage of the tunnels. Unfortunately, this meant that I had to renumber all of my IPv6 tunnels, since they were all /128's. Not a big deal. I didn't want to do this with the IPv4 (OpenVPN) tunnels, since the documentation strongly recommended against the use of anything other than a 32-bit netmask. This required the use of the ebgp-multihop command, since according to most [E]BGP implementations, /32's or /128's connecting to each other is not classified as 'directly connected' for some reason. (doesn't make sense to me, since even a TTL of 1 should theoretically allow communication to succeed)

At each site, I wanted to run IBGP internally, and designate one box to be the route reflector, in order to loosen the IBGP full-mesh requirement. Some of the OpenWrt devices did not have loopbacks at the time, so I needed to shuffle around some addresses and fix this.
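The benefit of a route reflector is easy to quantify. The sketch below compares the number of IBGP sessions in a full mesh with the number needed when a single router reflects for all the others. It assumes one reflector per site and no redundant reflector, which is a simplification.

```python
def full_mesh_sessions(routers: int) -> int:
    """IBGP sessions needed when every router peers with every other router."""
    return routers * (routers - 1) // 2


def route_reflector_sessions(routers: int) -> int:
    """IBGP sessions needed with one reflector and all other routers as clients."""
    return max(routers - 1, 0)
```

For a site with five routers, that is ten sessions in a full mesh versus four with a reflector. At a site with only two or three routers the saving is negligible.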

I'd still run an IGP internal to each site (not nox or dax, since they are only one router), and advertise a default route via OSPFv2 within the site, for Internet access. I could also advertise default routes from two different routers within a site, for redundancy and failover Internet access.

So, here's some of the tasks I performed prior to making any routing changes:

- Add loopbacks to all routers

- Redo all IPv6 tunnel interfaces, converted to /126's to avoid subnet-router anycast issues

- Redo tunnel naming standards (was too long before)
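The reasoning behind the /126 choice in the list above can be sketched with Python's ipaddress module. In any IPv6 subnet the all-zeros interface address is the subnet-router anycast address (RFC 4291), so a /126 leaves it unused and numbers the two tunnel ends ::1 and ::2. The prefix 2001:db8::/126 is a documentation prefix used for illustration, not a real PCN tunnel, and the helper name is hypothetical.

```python
import ipaddress
from typing import Tuple

IPv6 = ipaddress.IPv6Address


def tunnel_endpoints(prefix: str) -> Tuple[IPv6, IPv6, IPv6]:
    """Return (subnet-router anycast, local end, remote end) for a /126 tunnel."""
    net = ipaddress.ip_network(prefix)
    if net.version != 6 or net.prefixlen != 126:
        raise ValueError("expected an IPv6 /126 prefix")
    anycast = net.network_address  # all-zeros interface ID, left unassigned
    return anycast, net[1], net[2]


# Documentation prefix, for illustration only.
anycast, local_end, remote_end = tunnel_endpoints("2001:db8::/126")
```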

IPv6 Migration

I figured, since on most platforms, IGP routes take precedence over BGP routes, I could add all the peering relationships and get everything setup without skipping a beat. Quagga's zebra process wouldn't insert or remove anything from the FIB (the kernel routing table). Then I could remove OSPFv3 from all the WAN links, and zebra would just shuffle around the routes, but reachability would come back within a few minutes, maybe?

So I started building the BGP neighbors, and quickly ran into a problem. For some reason, no IPv6 BGP routes were being sent to other peers from Quagga's bgpd. I posted a message to the mailing list, and quickly got a helpful response. Apparently I was hitting a bug that's been in Quagga for awhile (typo) that dealt with the address-family negotiation between peers. The quick fix was to add 'override-capability' to each neighbor (or peer group) and it would accept all advertised address families.

After all the peers were set up, I disabled OSPFv3 on all the WAN links, and everything reconverged... oddly. It looked like BGP was doing path-selection based on tiebreakers, and picking the higher peer address as the best path for a destination, even if it meant not utilizing the directly connected link. After scratching my head for a few minutes, I realized my stupidity. Normal BGP treats AS_CONFED_SEQUENCE and AS_CONFED_SET as a length of one, so all paths through my network looked like they had an AS path length of *1*. Luckily, Quagga had a nice bgp bestpath as-path confed command that modified the path selection algorithm, and gave me what I wanted. I described this in a blog entry.
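The effect can be reproduced with a rough model of AS path length comparison. This is a sketch, not Quagga's code. It assumes the RFC 5065 recommendation that confederation segments are not counted at all by default, so the constant differs from the one quoted above, but the outcome is the same: every path inside the confederation ties on length. The sub-AS numbers are hypothetical.

```python
from typing import List, Tuple

Segment = Tuple[str, List[int]]  # (segment type, AS numbers in the segment)


def as_path_length(path: List[Segment], count_confed: bool = False) -> int:
    """Compute the AS path length used for best-path comparison."""
    length = 0
    for seg_type, asns in path:
        if seg_type == "AS_SEQUENCE":
            length += len(asns)
        elif seg_type == "AS_SET":
            length += 1  # a set counts once, however many ASes it holds
        elif seg_type == "AS_CONFED_SEQUENCE" and count_confed:
            length += len(asns)
        elif seg_type == "AS_CONFED_SET" and count_confed:
            length += 1
    return length


# Hypothetical sub-ASes: a direct path and a path through an extra site.
direct = [("AS_CONFED_SEQUENCE", [65001])]
detour = [("AS_CONFED_SEQUENCE", [65002, 65001])]
```

With `count_confed=False` both paths compare equal and later tiebreakers decide. With `count_confed=True`, modelling what the confed option is described as doing, the direct path wins.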

Since I wanted all loopbacks and transit interfaces reachable from anywhere, I added a ton of network statements to bgpd. It felt like a hack, but isn't too bad, since there's really no other way of doing it, without using a network-wide IGP.

IPv4 Migration

Since the IPv6 migration was successful, I figured the IPv4 migration would turn out the same - and it did, mostly.

I started setting up the IPv4 BGP neighbors, and ran into a strange issue with ScreenOS. I've documented it here. Basically, my two Juniper firewalls wouldn't establish IBGP connections unless they were configured as passive neighbors (wait for a connection).

After all the IPv4 BGP connections were up and running, I killed the network-wide IGP process entirely (shut off ospfd/ospf6d on dax and nox), and let everything reconverge. It worked out of the box - success!

I removed the static default routes on my OpenWrt routers, and advertised defaults at each site. No problem there.

Finish

Although I ran into a number of problems, and probably complicated troubleshooting of my network by an order of magnitude, I think the conversion was worth it. Now if anyone wants to start Xicada 2.0, we can do it right, this time...

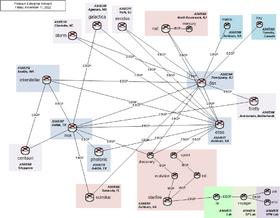

EBGP Conversion

I got sick of confederations, so I just removed the confederation statements and turned all the links into EBGP links. This is what the network currently looks like, from a BGP perspective:

Applications

PCN enables several applications:

- Voice (via SIP / G.711u)

- IPv6

- Streaming audio

Lab

- Main Article: PCN Lab

The PCN lab is Mark Kamichoff's network proving ground and general hacking arena.